Choosing between AI coding assistants is harder than it looks. Marketing pages all promise the same things — "faster code," "fewer bugs," "seamless integration" — and without a structured way to cut through that noise, you end up picking based on hype rather than fit. This post gives you a concrete evaluation framework across five dimensions: functional accuracy on real tasks, context window size, IDE and workflow integration, pricing structure, and data-handling policies. Work through each category and you'll know exactly where a tool earns its keep and where it falls short.

Functional Accuracy: Testing What Actually Matters for AI Coding Assistants

Accuracy benchmarks published by vendors measure performance on clean, isolated problems. Your codebase is not a benchmark. Real evaluation means throwing a tool at the messy, domain-specific work you actually do — legacy refactors, multi-file debugging, generating tests for poorly documented modules. The delta between benchmark scores and real-world performance is where most tools disappoint.

Single-Function Correctness vs. Multi-File Reasoning

A tool that autocompletes a sort function perfectly can still hallucinate method signatures when it has to reason across three files simultaneously. Test both. Write a small suite of self-contained problems to check raw correctness, then create a cross-file task — say, adding a new API endpoint that touches a router, a controller, and a database schema — and see how coherently the assistant handles the dependency chain. The failure modes are completely different, and you want to know about both before committing.
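For the self-contained half of that test, a tiny harness is usually enough. The sketch below is one possible shape, not a standard tool: `pass_rate`, `SORT_CASES`, and the two sample functions are hypothetical names, and the sample functions stand in for code you would paste from the assistant under test.

```python
from typing import Callable

# Hypothetical test cases: (arguments, expected result) pairs for one task.
SORT_CASES = [
    (([3, 1, 2],), [1, 2, 3]),
    (([],), []),
    (([2, 2, 1],), [1, 2, 2]),
]

def pass_rate(candidate: Callable, cases) -> float:
    """Return the fraction of cases the candidate function gets right."""
    passed = 0
    for args, expected in cases:
        try:
            if candidate(*args) == expected:
                passed += 1
        except Exception:
            # A crash counts as a failed case, not as a harness error.
            pass
    return passed / len(cases)

# Stand-ins for code pasted from an assistant.
def good_sort(xs):
    return sorted(xs)

def buggy_sort(xs):
    return sorted(set(xs))  # silently drops duplicates

print(pass_rate(good_sort, SORT_CASES))   # 1.0
print(pass_rate(buggy_sort, SORT_CASES))  # 2 of 3 cases pass
```

Keeping the cases in plain data makes it easy to rerun the same suite against every tool on your shortlist. The cross-file task still needs a human reading the diff.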

Hallucination Rate on Domain-Specific Libraries

General models are trained heavily on popular open-source packages. The moment you work with an internal SDK, a niche framework, or a recently released library version, hallucination risk spikes. Feed the assistant a real import from your stack that isn't widely represented on GitHub. If it confidently invents method names, that's a red flag with hard downstream costs — the bug might not surface until review or runtime.
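Invented method names can be caught mechanically before they reach review. The following is a minimal sketch, assuming the library is importable in the environment where you run the check. `unknown_attributes` is a hypothetical helper that only understands plain `import x` and `import x as y` statements with direct `x.attr` access. It parses the generated code without executing it, although it does import the referenced modules.

```python
import ast
import importlib

def unknown_attributes(source: str) -> list[str]:
    """List `module.attr` references where the module has no such attribute.

    Only handles `import x` / `import x as y` and direct `x.attr` access.
    """
    tree = ast.parse(source)
    aliases = {}  # local name -> real module name
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                aliases[alias.asname or alias.name] = alias.name
    missing = []
    for node in ast.walk(tree):
        if (isinstance(node, ast.Attribute)
                and isinstance(node.value, ast.Name)
                and node.value.id in aliases):
            module = importlib.import_module(aliases[node.value.id])
            if not hasattr(module, node.attr):
                missing.append(f"{aliases[node.value.id]}.{node.attr}")
    return missing

# Example: the standard json module has loads() but no to_string().
generated = """
import json
data = json.loads('{"a": 1}')
text = json.to_string(data)
"""
print(unknown_attributes(generated))  # ['json.to_string']
```

A check like this only covers top-level names. Methods on returned objects and wrong argument lists still need a type checker or a test run.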

Code Review and Explanation Quality

Generation is only half the job. Ask the tool to review a block of code you know contains a subtle race condition or an off-by-one error. Good AI coding assistants catch it and explain why. Mediocre ones praise the code and suggest style tweaks. This test is fast, costs you nothing, and reveals reasoning depth quickly.

Context Window: Why Size Is Not the Whole Story

A larger context window lets the assistant hold more of your codebase in working memory at once. That matters enormously for refactoring or understanding a sprawling module. But raw token count is misleading without knowing how the tool actually uses that context. Some models recall relevant code less reliably when it is buried in the middle of a long prompt than when it sits near the start or the end, a pattern documented in research on lost-in-the-middle degradation. Always test retrieval quality across the whole stated window, including the middle, not just at the edges.

Effective Context vs. Nominal Context

Nominal context is the number printed in the spec sheet. Effective context is how much of that window the model reliably attends to when generating accurate completions. Run a test: place a critical function definition at several depths in a large prompt (near the start, in the middle, and near the end) and ask the assistant to call it correctly in a new snippet. If it fails at any depth, your practical working window is smaller than advertised. This distinction matters more as codebases grow.
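A probe like that is easy to generate programmatically. The sketch below only builds the prompts and checks a completion. Sending the prompt to an assistant is left out because that step differs per tool. `build_probe`, `called_correctly`, and `compute_invoice_total` are hypothetical names, and the substring check is deliberately crude.

```python
def build_probe(filler_lines: list[str], needle: str, depth: float) -> str:
    """Insert `needle` at a relative position (0.0 = start, 1.0 = end)
    within the filler lines and return the assembled prompt text."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    index = round(depth * len(filler_lines))
    lines = filler_lines[:index] + [needle] + filler_lines[index:]
    return "\n".join(lines)

def called_correctly(completion: str, expected_call: str) -> bool:
    """Crude check: does the completion contain the expected call text?"""
    return expected_call in completion

# Filler that resembles code, plus one definition the assistant must find.
filler = [f"def helper_{i}(): return {i}" for i in range(1000)]
needle = "def compute_invoice_total(items, tax_rate): ..."

for depth in (0.0, 0.25, 0.5, 0.75, 1.0):
    prompt = build_probe(filler, needle, depth)
    position = prompt.split("\n").index(needle)
    print(f"depth={depth}: needle at line {position} of {len(filler) + 1}")
```

Scale the filler until the prompt approaches the advertised window, then record pass or fail per depth. The depth at which results start to fail gives a rough estimate of the effective window.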

Codebase Indexing and Retrieval

Some tools sidestep context limits with retrieval-augmented generation, indexing your entire repository and pulling relevant snippets at query time. This is often more practical than brute-forcing everything into one context window. Evaluate the quality of retrieval separately: does it surface the right file when you ask a conceptual question about a feature? Does it miss key dependencies? If you want a closer look at how modern tooling handles this at the IDE level, the CursorLens review covers how an open-source dashboard logs and audits exactly these retrieval decisions inside Cursor.
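One way to put a number on retrieval quality is recall at k: of the files a human says are needed to answer a question, how many show up in the tool's top k results? The sketch below assumes you label a handful of questions by hand and transcribe what the tool surfaced. All file paths and questions are made up for illustration.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant files that appear in the top-k results."""
    if not relevant:
        raise ValueError("relevant set must not be empty")
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)

# Hypothetical labelled questions: question -> files a human says are needed.
labelled = {
    "How are refunds calculated?": {"billing/refunds.py", "billing/tax.py"},
    "Where is the session token validated?": {"auth/session.py"},
}

# Hypothetical tool output, transcribed by hand, ranked best-first.
observed = {
    "How are refunds calculated?": ["billing/refunds.py", "billing/invoice.py", "README.md"],
    "Where is the session token validated?": ["auth/session.py", "auth/login.py"],
}

scores = {q: recall_at_k(observed[q], labelled[q], k=3) for q in labelled}
for question, score in scores.items():
    print(f"{score:.2f}  {question}")
print("mean recall@3:", sum(scores.values()) / len(scores))
```

In this example the refunds question scores 0.5 because `billing/tax.py` never surfaced, which is exactly the kind of missed dependency worth catching before rollout.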

IDE and Workflow Integration

An assistant that requires you to copy-paste between a web interface and your editor is a productivity drain, full stop. Deep IDE integration — inline completions, inline diffs, chat anchored to your current file, terminal access — removes that friction and keeps you in flow. But integration quality varies wildly even among tools that claim native support for the same editor.

Inline Completion Latency

Latency above roughly 300–400 milliseconds starts to disrupt typing rhythm. Measure it under realistic conditions: your actual internet connection, during business hours when model APIs are under load. A tool that performs beautifully on a fiber connection at midnight may lag frustratingly during peak hours. This is not a theoretical concern — it directly affects adoption across a team.
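If the tool exposes any scriptable way to trigger a completion, a few lines of timing code give you numbers instead of impressions. This is a rough sketch: `fake_completion_request` is a placeholder that just sleeps, and you would swap in whatever call your tool actually supports. The 95th percentile is reported alongside the median because occasional slow responses are what interrupt typing.

```python
import statistics
import time
from typing import Callable

def measure_latencies_ms(request: Callable[[], object], runs: int = 20) -> list[float]:
    """Time `request` repeatedly and return wall-clock latencies in ms."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        request()
        latencies.append((time.perf_counter() - start) * 1000)
    return latencies

def summarize(latencies_ms: list[float]) -> dict[str, float]:
    """Return the median and the 95th percentile of the samples."""
    cuts = statistics.quantiles(latencies_ms, n=20)  # 19 cut points, 5% apart
    return {"p50": statistics.median(latencies_ms), "p95": cuts[18]}

def fake_completion_request():
    time.sleep(0.01)  # placeholder for a real completion call

print(summarize(measure_latencies_ms(fake_completion_request)))
```

Run it at a few different times of day on the network your team really uses, and compare the p95 values rather than the best case.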

Agentic and Multi-Step Task Support

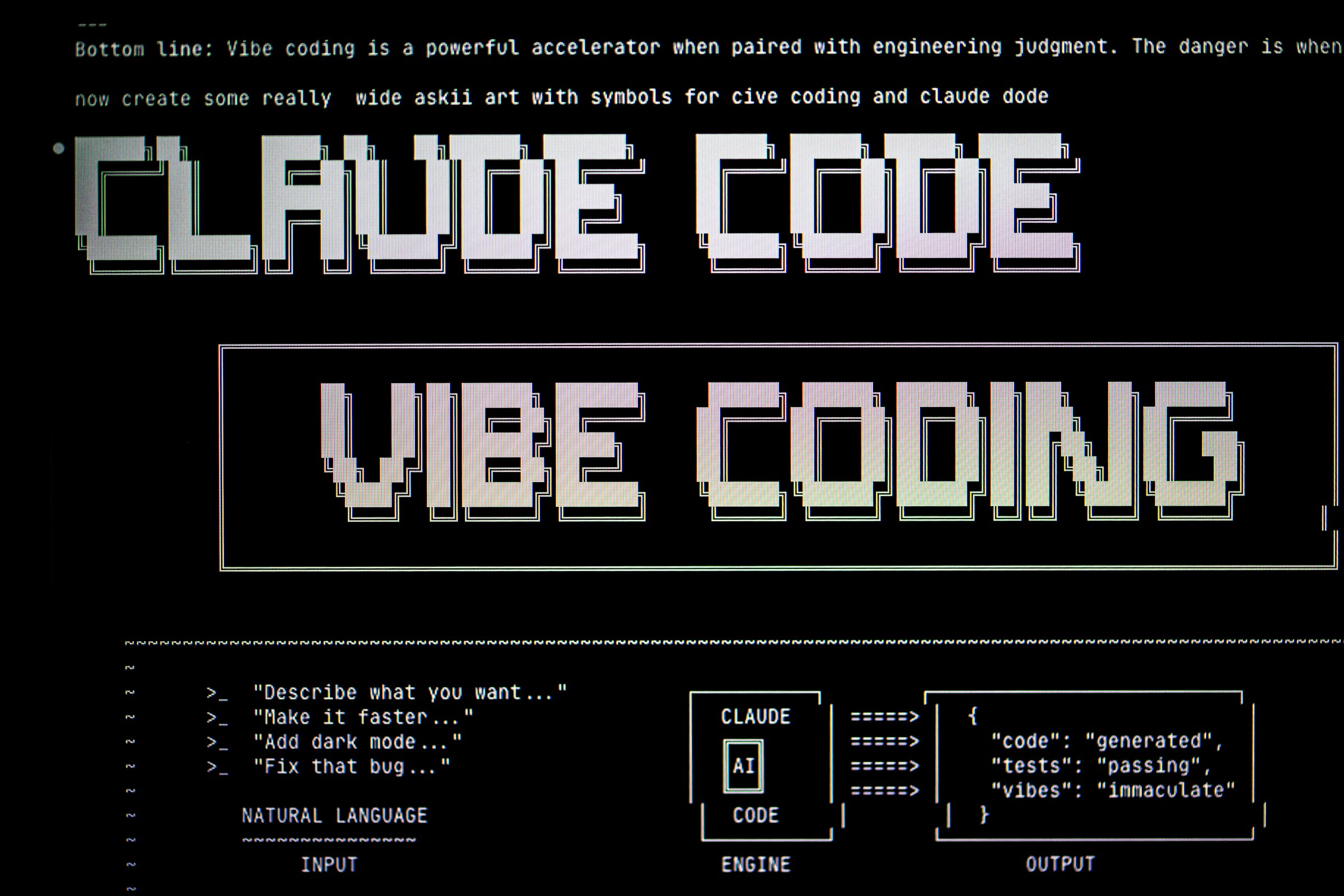

A growing category of AI coding assistants goes beyond autocomplete into agentic workflows: running tests, reading terminal output, iterating on a fix autonomously. This changes the evaluation criteria. For agentic tools you need to assess loop termination behavior (does it know when to stop?), error recovery (does it spiral on a failing test or adapt?), and scope discipline (does it touch files it shouldn't?). If you want a direct comparison of how leading tools handle these agentic capabilities, our Cursor vs GitHub Copilot vs Claude Code breakdown goes deep on exactly this dimension.
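Scope discipline is the easiest of those three to check automatically. A minimal sketch, assuming you can list the files an agent run changed (for example from `git diff --name-only`) and that you wrote down beforehand which paths the task was allowed to touch. `out_of_scope` and the example paths are hypothetical.

```python
from fnmatch import fnmatchcase

def out_of_scope(changed_files: list[str], allowed_patterns: list[str]) -> list[str]:
    """Return changed paths that match none of the allowed glob patterns.

    Note: in fnmatch-style patterns, `*` also matches `/`, so
    `src/api/*` covers nested directories too.
    """
    return [
        path for path in changed_files
        if not any(fnmatchcase(path, pattern) for pattern in allowed_patterns)
    ]

# The task was "add an endpoint", so only API code and its tests are allowed.
allowed = ["src/api/*", "tests/api/*"]
changed = ["src/api/routes.py", "tests/api/test_routes.py", "deploy/prod.yaml"]

print(out_of_scope(changed, allowed))  # ['deploy/prod.yaml']
```

Loop termination and error recovery are harder to reduce to a single check. For those, cap the number of iterations you allow and read the run transcript.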

Team Collaboration Features

Individual productivity is the obvious sell, but team features matter too. Shared prompt libraries, usage dashboards, per-seat licensing controls, and the ability to set organization-wide model policies all affect whether a tool scales from one developer to fifty. Prompt libraries deserve particular attention: a well-structured prompt repository can meaningfully improve the consistency of AI output across a team, and the AI Prompt Library review explores how curated prompt collections work in practice for tools like this.

Pricing Structure: Total Cost of Ownership

Headline per-seat pricing rarely captures actual cost. Token consumption, model tier choices, and overage fees stack up fast in a large team. Before signing anything, map out a realistic monthly usage scenario: how many completions, how many chat turns, how many agentic runs per developer per day. Then model the cost at three team sizes — solo, small team, and 50+ seats. The tool that looks cheapest at one seat often has the worst unit economics at scale.
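That usage scenario fits in a short script. The model below is deliberately simple and every number in it is a made-up placeholder, not any vendor's real pricing. It assumes a flat seat fee, a per-seat monthly request allowance that is not pooled across the team, and a fixed overage price per request.

```python
from dataclasses import dataclass

@dataclass
class Plan:
    """Hypothetical pricing plan; all prices are illustrative placeholders."""
    seat_price: float        # flat monthly fee per developer
    included_requests: int   # monthly requests covered per seat
    overage_price: float     # cost per request beyond the allowance

def monthly_cost(plan: Plan, seats: int, requests_per_dev_per_day: int,
                 working_days: int = 21) -> float:
    """Seat fees plus overage, assuming allowances are not pooled."""
    used = requests_per_dev_per_day * working_days
    overage = max(0, used - plan.included_requests)
    return seats * (plan.seat_price + overage * plan.overage_price)

plan_a = Plan(seat_price=10.0, included_requests=500, overage_price=0.04)
plan_b = Plan(seat_price=30.0, included_requests=3000, overage_price=0.02)

for seats in (1, 8, 50):
    a = monthly_cost(plan_a, seats, requests_per_dev_per_day=60)
    b = monthly_cost(plan_b, seats, requests_per_dev_per_day=60)
    print(f"{seats:>2} seats: plan A ${a:,.2f}  plan B ${b:,.2f}")
```

With these placeholder numbers, the plan with the lower headline price costs more per seat once overage is counted. Volume discounts, pooled allowances, and model-tier multipliers would each need an extra term in `monthly_cost`.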

Free Tiers and Trial Depth

A free tier that caps you at fifty completions per month tells you almost nothing useful. Look for trials that let you run the tool at realistic production volume for at least two weeks. That's long enough to hit edge cases, develop muscle memory, and surface the latency and quality issues that don't appear in a 30-minute demo. If a vendor won't offer that, treat it as a data point about their confidence in the product.

Model Flexibility and Bring-Your-Own-Key Options

Some platforms let you supply your own API key for an underlying model (OpenAI, Anthropic, etc.), which can dramatically reduce cost if you already have favorable enterprise pricing with those providers. Others lock you into their hosted inference at a markup. Neither is inherently wrong, but the distinction affects your total cost calculation and your negotiating leverage at renewal time.

Data Handling and Security Policies

Code sent to a third-party AI service is often the most sensitive data a company produces. Before deploying any AI coding assistant across a team, you need clear answers on three questions: Is my code used to train future models? Where is it stored, and for how long? What are the data residency options? OWASP's LLM Top 10 lists training data poisoning and sensitive information disclosure among the leading risks for LLM-integrated applications — both are directly relevant here.

Zero Data Retention vs. Standard Policies

Zero data retention (ZDR) means your prompts and completions are not logged beyond the immediate inference call. This is a hard requirement in many regulated industries, such as healthcare, finance, and defense contracting. If ZDR isn't available natively, check whether the vendor will sign a business associate agreement (BAA, for HIPAA-covered data) or an enterprise data processing agreement with contractual retention limits that meet your requirement. Verbal assurances aren't enough; get it in writing in the subscription agreement.

On-Premises and Air-Gapped Deployment

For the most sensitive environments, cloud-based inference of any kind is a non-starter. Some AI coding assistant vendors offer self-hosted or on-premises deployment options — the model runs inside your own infrastructure, code never leaves your network. These deployments come with higher operational overhead and typically a steeper price tag, but for certain compliance regimes there's no alternative. Evaluate whether the vendor's self-hosted offering uses the same model as the cloud product or a smaller, older version; that gap matters for quality comparisons.

Evaluating AI coding assistants rigorously takes real time upfront, but it saves weeks of painful migration later. Treat each of these five dimensions (accuracy on your actual tasks, effective context window, integration depth, total cost of ownership, and data handling) as a separate scorecard. Weight them according to your team's priorities: a startup moving fast might rank integration and cost highest, while an enterprise team in a regulated industry might lead with data policy. Get those weights clear before you start testing, and the right choice becomes much easier to see.
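A weighted scorecard can be as small as the sketch below. The tool names, the 1 to 5 scores, and both weight profiles are invented for illustration. The point is only that the same scores can rank differently under different priorities.

```python
DIMENSIONS = ("accuracy", "context", "integration", "cost", "data_handling")

def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-dimension scores; weights are normalized."""
    total_weight = sum(weights[d] for d in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[d] * weights[d] for d in DIMENSIONS) / total_weight

# Hypothetical scores on a 1-5 scale from your own testing.
tool_x = {"accuracy": 4, "context": 3, "integration": 5, "cost": 4, "data_handling": 2}
tool_y = {"accuracy": 4, "context": 4, "integration": 3, "cost": 2, "data_handling": 5}

# Hypothetical weight profiles, fixed before testing starts.
startup = {"accuracy": 2, "context": 1, "integration": 3, "cost": 3, "data_handling": 1}
regulated = {"accuracy": 2, "context": 1, "integration": 1, "cost": 1, "data_handling": 4}

for name, weights in (("startup", startup), ("regulated", regulated)):
    x = weighted_score(tool_x, weights)
    y = weighted_score(tool_y, weights)
    print(f"{name}: tool X {x:.2f}, tool Y {y:.2f}")
```

Here tool X wins under the startup weights and tool Y wins under the regulated weights, which is why the weights need to be settled before anyone looks at the scores.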