Retrieval-augmented generation is the architecture most production AI apps actually use when they need to answer questions from private or frequently updated data. This guide walks through what RAG is, when it beats fine-tuning (and when it doesn't), how each stage of the pipeline works, and the mistakes that derail teams between prototype and production. By the end you'll have a concrete mental model of every moving part, plus the judgment to spot where a given system is likely to break.

What Retrieval-Augmented Generation Actually Is

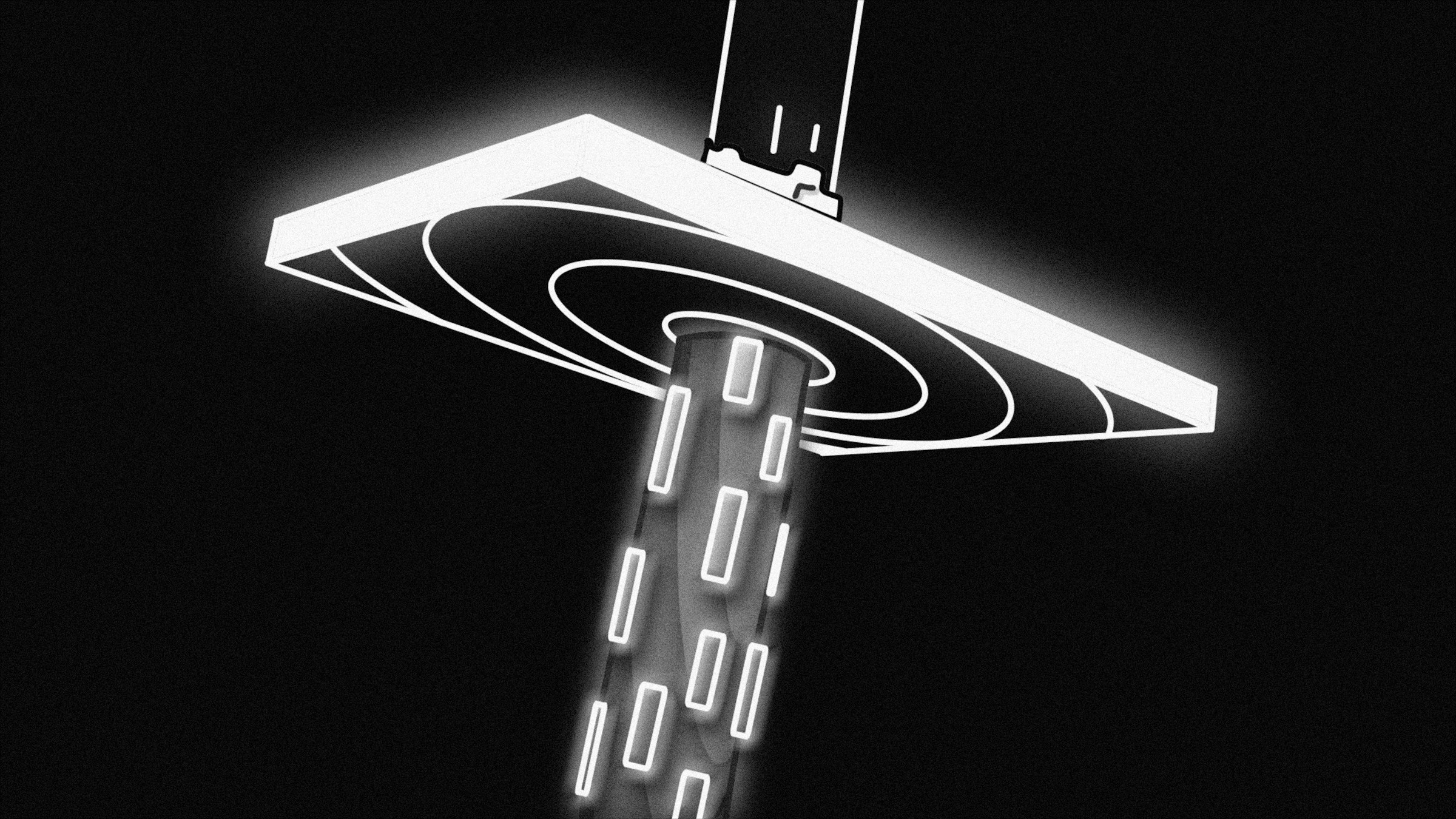

At its simplest, RAG splits answering a query into two phases: retrieve relevant context from an external knowledge store, then generate an answer with an LLM conditioned on that context. The LLM never has to memorize your proprietary data; it reads it at inference time, the same way a human analyst looks up a document before writing a report. Lewis et al. (2020) introduced the term and showed that RAG models produced more specific and factual output than a parametric-only baseline on knowledge-intensive tasks.

Why This Matters for Private Data

A general-purpose LLM knows nothing about your internal contracts, your product catalog from last Tuesday, or your company's support tickets. Fine-tuning can inject some of that knowledge, but the model still hallucinates on facts it saw rarely during training, and you have to retrain every time the data changes. RAG sidesteps both problems by keeping the knowledge external and live.

The Core Loop

A user submits a query. Your system converts that query into an embedding vector and searches a vector store for the top-k most semantically similar chunks. Those chunks are injected into a prompt alongside the original query. The LLM synthesizes an answer grounded in the retrieved text. That's the loop — every complexity in a production system is a refinement of one of those four steps.
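The loop above can be sketched in a few lines of Python. This is a minimal sketch under loud assumptions: `embed` is a toy bag-of-words counter standing in for a real embedding model, the "vector store" is a plain list scanned in full, and the final LLM call (step four) is left out. All function names are illustrative, not any library's API.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: a sparse bag-of-words count vector.
    return Counter(re.findall(r"\w+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    # Counter returns 0 for missing terms, so only shared terms contribute to the dot product.
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, chunks: list[str], k: int = 3) -> list[str]:
    # Steps 1-2: embed the query and return the k most similar chunks.
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

def build_prompt(query: str, context: list[str]) -> str:
    # Step 3: inject the retrieved chunks next to the original query.
    numbered = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(context, start=1))
    return f"Context:\n{numbered}\n\nQuestion: {query}\nAnswer using only the context above."

# Step 4 (not shown): send build_prompt(query, retrieve(query, chunks)) to an LLM.
```

Swapping in a real embedding model and vector store changes how `embed` and `retrieve` are implemented, not the shape of the loop.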

RAG vs. Fine-Tuning: Choosing the Right Tool

This is the question teams wrestle with most often, and the honest answer is that they solve different problems. Fine-tuning changes how the model reasons and responds — its style, its domain vocabulary, its output format. RAG changes what facts the model has access to at inference time. They are not substitutes; many mature systems use both.

When RAG Wins

Use RAG when your knowledge base changes frequently (weekly product updates, new legal filings, evolving support docs). Use it when you need citations — RAG can return source chunks alongside the answer, making the system auditable. Use it when data volume is large and heterogeneous: a vector store scales to millions of documents far more cheaply than repeated fine-tuning runs. Tools like IngestAI are purpose-built for exactly this scenario, letting enterprise teams wire RAG pipelines to existing document repositories without standing up bespoke infrastructure from scratch.

When Fine-Tuning Wins

Fine-tuning is better when you need the model to adopt a specific output schema reliably, speak a technical dialect fluently, or follow domain-specific reasoning patterns. A medical coding assistant that needs to output ICD-10 codes in a precise structured format benefits from fine-tuning. A customer support bot that needs to answer questions from a 50,000-page knowledge base updated daily does not — that's a RAG job.

Building the RAG Pipeline: Stage by Stage

Most failures in production RAG are pipeline failures, not model failures. A mediocre LLM with well-retrieved context beats a state-of-the-art LLM given garbage chunks. Spend your engineering time on the retrieval side.

Chunking: The Overlooked Foundation

Chunking is how you split source documents into pieces small enough to embed meaningfully but large enough to carry coherent context. Fixed-size chunking (e.g., 512 tokens with a 50-token overlap) is the usual starting point, but it ignores document structure and will happily cut a sentence or section in half. Semantic chunking (splitting on paragraph breaks, heading structure, or detected sentence boundaries) preserves meaning better. For structured documents like PDFs and spreadsheets, consider tools like Anara, which handles multi-format document ingestion with layout awareness baked in. The rule of thumb: your chunk size should roughly match the granularity of a self-contained fact or argument in your corpus.
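A minimal sketch of both strategies follows, assuming whitespace-separated words as a proxy for tokens (a real pipeline would count tokens with the embedding model's tokenizer) and blank lines as paragraph boundaries. `fixed_size_chunks` and `paragraph_chunks` are hypothetical helper names.

```python
import re

def fixed_size_chunks(text: str, size: int = 512, overlap: int = 50) -> list[str]:
    # Sliding window of `size` words; consecutive windows share `overlap` words.
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this window already reached the end of the text
            break
    return chunks

def paragraph_chunks(text: str, max_words: int = 512) -> list[str]:
    # Split on blank lines, then pack whole paragraphs into chunks of up to max_words.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks, current, count = [], [], 0
    for p in paragraphs:
        n = len(p.split())
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(p)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

A single paragraph longer than `max_words` is kept whole here; a fuller version would fall back to fixed-size splitting for it.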

Embeddings: Turning Text into Search

An embedding model converts each chunk (and each query) into a dense vector. Semantic similarity between query and chunk becomes a distance calculation in that vector space. The MTEB leaderboard is the standard reference for comparing embedding models on retrieval benchmarks. OpenAI's text-embedding-3-large, Cohere's Embed v3, and open-weight models like bge-large-en-v1.5 all perform well depending on your latency and cost constraints. Critically, use the same embedding model at indexing time and query time — a mismatch silently breaks retrieval.
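One cheap safeguard against the mismatch described above is to record which model built the index and check it at query time. The sketch assumes vectors are plain Python lists and that you track a model identifier string yourself; `Index` and `check_query_model` are hypothetical names. The dimension check in `cosine_similarity` only catches mismatches between models with different output sizes, which is why the explicit model check is there too.

```python
import math
from dataclasses import dataclass, field

def cosine_similarity(a: list[float], b: list[float]) -> float:
    # Different models often emit vectors of different sizes; refuse to compare them.
    if len(a) != len(b):
        raise ValueError(f"dimension mismatch: {len(a)} vs {len(b)}")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

@dataclass
class Index:
    model_id: str  # which embedding model produced every vector in this index
    vectors: list[list[float]] = field(default_factory=list)

def check_query_model(index: Index, query_model_id: str) -> None:
    # Two models with the same output size still produce unrelated vector spaces, so a
    # dimension check alone would let this mismatch through silently.
    if query_model_id != index.model_id:
        raise ValueError(
            f"index built with {index.model_id!r} but query embedded with {query_model_id!r}"
        )
```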

Vector Stores: Where the Index Lives

The vector store holds your embeddings and serves approximate-nearest-neighbor (ANN) queries fast. Pinecone, Weaviate, Qdrant, pgvector, and ChromaDB are the most common choices. For small corpora under a few hundred thousand chunks, pgvector on an existing Postgres instance is often sufficient and avoids operational overhead. At scale, dedicated vector databases with HNSW indexes and filtering support earn their complexity. Always store the original chunk text alongside the embedding — you'll need it to assemble the final prompt.
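To make "store the original chunk text alongside the embedding" concrete, here is a hypothetical in-memory store that does exact, brute-force search. It is a sketch for intuition rather than a substitute for the databases named above: it assumes a small corpus and list-based vectors, and a real store would replace the linear scan with an ANN index such as HNSW.

```python
import heapq
import math
from dataclasses import dataclass

@dataclass
class Record:
    chunk_id: str
    text: str  # the original chunk text: the prompt needs this, not the vector
    vector: list[float]

class InMemoryVectorStore:
    """Exact nearest-neighbour search by cosine similarity over every stored record."""

    def __init__(self) -> None:
        self.records: list[Record] = []

    def add(self, chunk_id: str, text: str, vector: list[float]) -> None:
        self.records.append(Record(chunk_id, text, vector))

    def search(self, query: list[float], k: int = 3) -> list[tuple[float, Record]]:
        def score(r: Record) -> float:
            dot = sum(x * y for x, y in zip(query, r.vector))
            norm = math.sqrt(sum(x * x for x in query)) * math.sqrt(sum(x * x for x in r.vector))
            return dot / norm if norm else 0.0

        # Linear scan: O(n) per query, which is exactly what ANN indexes avoid at scale.
        scored = ((score(r), r) for r in self.records)
        return heapq.nlargest(k, scored, key=lambda pair: pair[0])
```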

Reranking: Rescoring the Retrieved Set

ANN search retrieves candidates fast but imprecisely. A reranker (typically a cross-encoder model like Cohere Rerank or a fine-tuned BERT variant) scores each retrieved chunk jointly with the query and reorders the set. This two-stage approach (fast ANN retrieval, slower cross-encoder reranking) usually outperforms single-stage retrieval in production, and the uplift tends to be largest on longer, more nuanced queries. Reranking adds latency (30-100 ms is a typical range, growing with model size and the number of candidates rescored), but for most customer-facing applications the quality improvement justifies it.
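The second stage can be sketched as a function that takes first-stage candidates and a pairwise scoring function. The cross-encoder itself is out of scope here: `toy_overlap_score` is a hypothetical placeholder that only measures word overlap, where a real system would run a cross-encoder model on each (query, chunk) pair.

```python
from typing import Callable

def rerank(
    query: str,
    candidates: list[str],
    score_pair: Callable[[str, str], float],
    top_k: int = 5,
) -> list[str]:
    # Stage 2: score each (query, chunk) pair jointly and keep the top_k best.
    scored = [(score_pair(query, chunk), chunk) for chunk in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:top_k]]

def toy_overlap_score(query: str, chunk: str) -> float:
    # Hypothetical placeholder for a cross-encoder: share of query words found in the chunk.
    q_words = set(query.lower().split())
    if not q_words:
        return 0.0
    return len(q_words & set(chunk.lower().split())) / len(q_words)
```

In practice the first stage might return a few dozen candidates, and the reranker trims them to the handful that go into the prompt.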

LLM Synthesis: Turning Context into Answers

The final stage is prompt construction and generation. Pass the top-k reranked chunks as context, include the user's query, and add explicit instructions about how to handle cases where the context is insufficient — "if the answer is not in the provided documents, say so" is not optional, it's load-bearing. Prompt length matters: if you cram 20 chunks into a 128k context window, the LLM may still miss facts buried in the middle due to the lost-in-the-middle phenomenon documented in Liu et al. (2023). Three to five highly relevant chunks often outperform twenty loosely relevant ones.
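A minimal sketch of prompt assembly along those lines, assuming the chunks arrive already reranked (best first). The instruction wording, the `<document>` delimiters, the function name `build_grounded_prompt`, and the default of five chunks are illustrative choices, not a prescribed format.

```python
ABSTAIN_INSTRUCTION = (
    "Answer the question using only the documents below. "
    "If the answer is not in the provided documents, say so instead of guessing."
)

def build_grounded_prompt(query: str, reranked_chunks: list[str], max_chunks: int = 5) -> str:
    # Keep only the top few chunks instead of filling the context window, since facts
    # buried in the middle of a long context are easier for the model to miss.
    selected = reranked_chunks[:max_chunks]
    documents = "\n\n".join(
        f'<document id="{i}">\n{chunk}\n</document>' for i, chunk in enumerate(selected, start=1)
    )
    return f"{ABSTAIN_INSTRUCTION}\n\n{documents}\n\nQuestion: {query}\nAnswer:"
```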

Common Pitfalls That Kill Production RAG

Prototype RAG is easy to build. Production RAG is where assumptions collapse. Here are the failure modes that show up repeatedly.

Query-Document Mismatch

Embeddings are trained on a distribution of text. If your documents are highly technical and your users ask casual questions (or vice versa), the embedding space may not bridge the gap well. HyDE (Hypothetical Document Embeddings), which generates a hypothetical answer first and then embeds that instead of the raw query, is one mitigation. Query expansion, using an LLM to reformulate the question into multiple variants, is another. Both add latency and complexity, so measure retrieval quality first to confirm that query-document mismatch is actually what is failing before adding either.
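Both mitigations share one shape: call an LLM before embedding. In the sketch below, `generate` and `embed` are hypothetical callables you would back with your own LLM and embedding model, and the prompt strings are illustrative. `hyde_query_vector` is a simplified single-sample version of HyDE; the original method can generate several hypothetical documents and average their embeddings.

```python
from typing import Callable

Vector = list[float]

def hyde_query_vector(query: str, generate: Callable[[str], str], embed: Callable[[str], Vector]) -> Vector:
    # HyDE: embed a hypothetical answer passage instead of the raw question. The passage
    # may be factually wrong; it only has to resemble the documents being searched.
    hypothetical = generate(f"Write a short passage that answers this question: {query}")
    return embed(hypothetical)

def expand_query(query: str, generate: Callable[[str], str], n: int = 3) -> list[str]:
    # Query expansion: ask for n reformulations, one per line, and keep the original too.
    raw = generate(f"Rewrite this question {n} different ways, one per line: {query}")
    variants = [line.strip() for line in raw.splitlines() if line.strip()]
    return [query] + variants[:n]
```

Each expanded variant would then be retrieved separately and the result lists merged, for example with reciprocal rank fusion.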

Stale Indexes

Documents get updated. If your indexing pipeline doesn't track document versions and re-embed changed chunks, the vector store drifts from the source of truth. Build document-level change detection (hash comparison, webhook triggers, or scheduled diffs) into your pipeline from day one. Retrofitting it after launch is painful. This is also where AI-powered document management tools, like those covered in our roundup of the best document management AI tools, can handle ingestion and versioning as a service rather than a custom build.
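Hash comparison is the simplest of those three options to sketch. The version below assumes documents are available as a `doc_id -> text` mapping and that you persisted one SHA-256 hash per document at the last indexing run; `diff_documents` is a hypothetical helper that reports which documents to re-embed and which to purge.

```python
import hashlib

def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def diff_documents(current: dict[str, str], indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Compare source documents (doc_id -> text) against hashes recorded at last indexing."""
    added, changed = [], []
    for doc_id, text in current.items():
        if doc_id not in indexed_hashes:
            added.append(doc_id)
        elif indexed_hashes[doc_id] != content_hash(text):
            changed.append(doc_id)
    deleted = [doc_id for doc_id in indexed_hashes if doc_id not in current]
    # Added and changed docs need (re-)chunking and re-embedding; deleted docs need
    # their chunks removed from the vector store.
    return {"added": sorted(added), "changed": sorted(changed), "deleted": sorted(deleted)}
```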

Ignoring Retrieval Evaluation

Teams evaluate their RAG system end-to-end (does the final answer look right?) without ever measuring retrieval quality independently. This makes debugging impossible. Build a retrieval evaluation set: questions with known relevant chunks. Measure recall@k and mean reciprocal rank before you ship. If retrieval quality is low, no amount of prompt engineering on the synthesis stage will fix it.
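Both metrics are short to compute once you have, for each evaluation question, the ranked chunk ids your retriever returned and the set of chunk ids labeled relevant. A minimal sketch, with hypothetical function names and chunk ids as plain strings:

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    # Fraction of the relevant chunk ids that appear in the top-k results.
    if not relevant:
        return 0.0  # questions with no relevant chunk belong in a separate abstention check
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    # 1 / position of the first relevant chunk (1-based); 0 if none was retrieved.
    for rank, chunk_id in enumerate(retrieved, start=1):
        if chunk_id in relevant:
            return 1.0 / rank
    return 0.0

def evaluate(runs: list[tuple[list[str], set[str]]], k: int = 5) -> dict[str, float]:
    # runs: one (retrieved ids, relevant ids) pair per question in the evaluation set.
    if not runs:
        raise ValueError("evaluation set is empty")
    n = len(runs)
    return {
        f"recall@{k}": sum(recall_at_k(r, rel, k) for r, rel in runs) / n,
        "mrr": sum(reciprocal_rank(r, rel) for r, rel in runs) / n,
    }
```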

Over-chunking and Under-chunking

Chunks that are too small strip away the surrounding context that makes a fact meaningful. Chunks that are too large dilute the embedding signal and bloat the prompt. There's no universal correct chunk size — it depends on your document structure. Run offline experiments with your actual corpus rather than copying defaults from a tutorial written for a different dataset.

Security and Data Leakage

In multi-tenant systems, a user's query must only retrieve documents they're authorized to access. Vector store metadata filters are the standard mechanism — every chunk should carry a tenant or permission tag, and every query should include a filter clause. Failing to enforce this at the retrieval layer means a prompt injection attack or a malicious query could surface another user's private data. This is not a hypothetical edge case; it's a documented attack class. If you're building production apps with embedded AI and need robust access control patterns, the Retool AI review covers how enterprise-grade app platforms handle permissioning around AI components.
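The sketch below shows the ordering that matters: filter by permission tag first, rank second. It assumes an in-memory list of chunks and a `tenant_id` taken from the authenticated session rather than from anything the user typed; `Chunk` and `search_for_tenant` are hypothetical names. With a real vector store you would express the same constraint as a metadata filter in the query itself.

```python
import math
from dataclasses import dataclass

@dataclass
class Chunk:
    text: str
    vector: list[float]
    tenant_id: str  # permission tag attached at indexing time

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def search_for_tenant(chunks: list[Chunk], query_vec: list[float], tenant_id: str, k: int = 3) -> list[Chunk]:
    # Filter first: other tenants' chunks are never scored, so no query wording
    # (including a prompt injection) can pull them into the result set.
    allowed = [c for c in chunks if c.tenant_id == tenant_id]
    # Rank second, only among chunks this tenant is authorized to see.
    allowed.sort(key=lambda c: cosine(query_vec, c.vector), reverse=True)
    return allowed[:k]
```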

Evaluating a RAG System End to End

Evaluation is where most teams underinvest. A useful framework breaks quality into three components: retrieval quality (did we surface the right chunks?), answer faithfulness (is the generated answer grounded in the retrieved context, not hallucinated?), and answer relevance (does it actually address what the user asked?). Frameworks like RAGAS provide automated metrics covering all three; RAGAS, for instance, scores retrieval with context precision and context recall. Human evaluation remains essential for catching failure modes that automated metrics miss, especially tone, completeness, and edge cases in technical domains.

Building a Ground-Truth Test Set

Start with 50 to 100 question-answer pairs that cover your core use cases. Include adversarial examples: questions whose answers are not in the corpus (the system should abstain), questions that span multiple documents (the system must synthesize), and ambiguous queries. A test set this size is usually enough to catch major regressions without requiring a large annotation budget. Expand it over time using real user queries flagged for review. Note-taking and knowledge management tools (see our coverage of the best note-taking and knowledge AI tools) can help teams organize and annotate evaluation datasets without a bespoke internal tool.
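One way to keep such a test set honest is to tag each case with its type and score the abstention cases separately. This is a sketch under assumptions: `EvalCase`, `abstention_rate`, and the `kind` labels are hypothetical, `answer` stands in for your RAG system, and `is_abstention` is whatever check you use to decide that a response declined to answer.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class EvalCase:
    question: str
    expected_answer: Optional[str]  # None: the answer is deliberately absent from the corpus
    relevant_chunk_ids: set[str] = field(default_factory=set)
    kind: str = "core"  # e.g. "core", "out_of_corpus", "multi_document", "ambiguous"

def abstention_rate(
    cases: list[EvalCase],
    answer: Callable[[str], str],
    is_abstention: Callable[[str], bool],
) -> float:
    # Share of out-of-corpus questions the system correctly declined to answer.
    out_of_corpus = [c for c in cases if c.expected_answer is None]
    if not out_of_corpus:
        return 0.0
    declined = sum(is_abstention(answer(c.question)) for c in out_of_corpus)
    return declined / len(out_of_corpus)
```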

Architecture Patterns Worth Knowing

Beyond the basic pipeline, several patterns have become standard in serious production systems.

Hybrid Search

Pure vector search misses exact keyword matches that BM25 (sparse retrieval) handles well. Hybrid search runs both in parallel and merges the two ranked lists, commonly with reciprocal rank fusion (RRF). The combination often outperforms either approach alone, particularly on domain-specific queries involving product names, codes, or proper nouns.
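Reciprocal rank fusion is simple enough to show in full: each document's fused score is the sum of 1 / (k + rank) over every ranked list it appears in, so documents ranked well by both retrievers rise to the top. The sketch assumes each retriever returns an ordered list of document ids; k = 60 is a commonly used default rather than a tuned value.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    # Fused score of a document = sum over lists of 1 / (k + rank), with 1-based ranks.
    # A document missing from a list simply gets no contribution from that list.
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.__getitem__, reverse=True)
```

Usage would look like `reciprocal_rank_fusion([bm25_ids, vector_ids])`, where both argument names are hypothetical. Because RRF uses only ranks, it sidesteps the problem that BM25 scores and cosine similarities live on different scales.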

Agentic RAG

In agentic setups, the LLM decides whether to retrieve, which queries to issue, and whether the retrieved context is sufficient or requires a follow-up retrieval step. This handles multi-hop questions — "what did our contract say about penalty clauses, and how does that compare to the industry standard?" — that a single-shot retrieval cannot answer cleanly. The tradeoff is latency and complexity. Agentic RAG is worth the investment for reasoning-intensive use cases; it's overkill for simple Q&A.
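A bounded agentic loop might look like the sketch below. `next_query` stands in for the LLM's decision step and returns either a follow-up search query or `None` when it judges the context sufficient; `retrieve` and `answer` are likewise hypothetical callables. The `max_steps` cap is one simple way to keep the latency tradeoff bounded.

```python
from typing import Callable, Optional

def agentic_answer(
    question: str,
    retrieve: Callable[[str], list[str]],
    next_query: Callable[[str, list[str]], Optional[str]],
    answer: Callable[[str, list[str]], str],
    max_steps: int = 3,
) -> str:
    context: list[str] = []
    for _ in range(max_steps):  # hard cap on retrieval rounds bounds latency and cost
        # Decision step: given the question and context so far, search again or stop.
        query = next_query(question, context)
        if query is None:
            break
        for chunk in retrieve(query):
            if chunk not in context:  # skip chunks already gathered in earlier rounds
                context.append(chunk)
    return answer(question, context)
```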

Caching

Semantic caching stores recent query-answer pairs and returns cached results for semantically similar incoming queries. This cuts latency and cost dramatically for high-volume systems where many users ask equivalent questions. Implement it as a layer in front of the retrieval pipeline, not after — you want to skip the full pipeline on a cache hit.
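A minimal sketch of a semantic cache placed in front of the pipeline. It assumes an `embed` callable that returns list-based vectors and a `pipeline` callable that runs retrieval plus generation; `SemanticCache` and `answer_with_cache` are hypothetical names, and the 0.95 cosine threshold is an illustrative placeholder you would tune on real query pairs, since too low a threshold returns answers to questions that only look similar.

```python
import math
from typing import Callable, Optional

Vector = list[float]

def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

class SemanticCache:
    """Stores (query embedding, answer) pairs. Assumes a single tenant; a multi-tenant
    system would need the same permission scoping on the cache as on retrieval."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.95) -> None:
        self.embed = embed
        self.threshold = threshold  # illustrative default, not a recommendation
        self.entries: list[tuple[Vector, str]] = []

    def lookup(self, query_vec: Vector) -> Optional[str]:
        # Return the answer of the most similar cached query if it clears the threshold.
        best = max(self.entries, key=lambda e: cosine(query_vec, e[0]), default=None)
        if best is not None and cosine(query_vec, best[0]) >= self.threshold:
            return best[1]
        return None

    def store(self, query_vec: Vector, answer: str) -> None:
        self.entries.append((query_vec, answer))

def answer_with_cache(query: str, cache: SemanticCache, pipeline: Callable[[str], str]) -> str:
    # The cache sits in front of the pipeline: a hit skips retrieval, reranking and generation.
    query_vec = cache.embed(query)
    cached = cache.lookup(query_vec)
    if cached is not None:
        return cached
    result = pipeline(query)
    cache.store(query_vec, result)
    return result
```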

Retrieval-augmented generation has moved from research curiosity to table stakes for any AI application that needs to work reliably on private or dynamic data. The pipeline is learnable, the tooling is mature, and the failure modes are well-documented — which means most of the hard work now is engineering discipline rather than research novelty. Get your chunking right, evaluate retrieval independently, enforce access control at the vector layer, and you'll avoid the mistakes that send most teams back to the drawing board after launch.