CloudflareAI

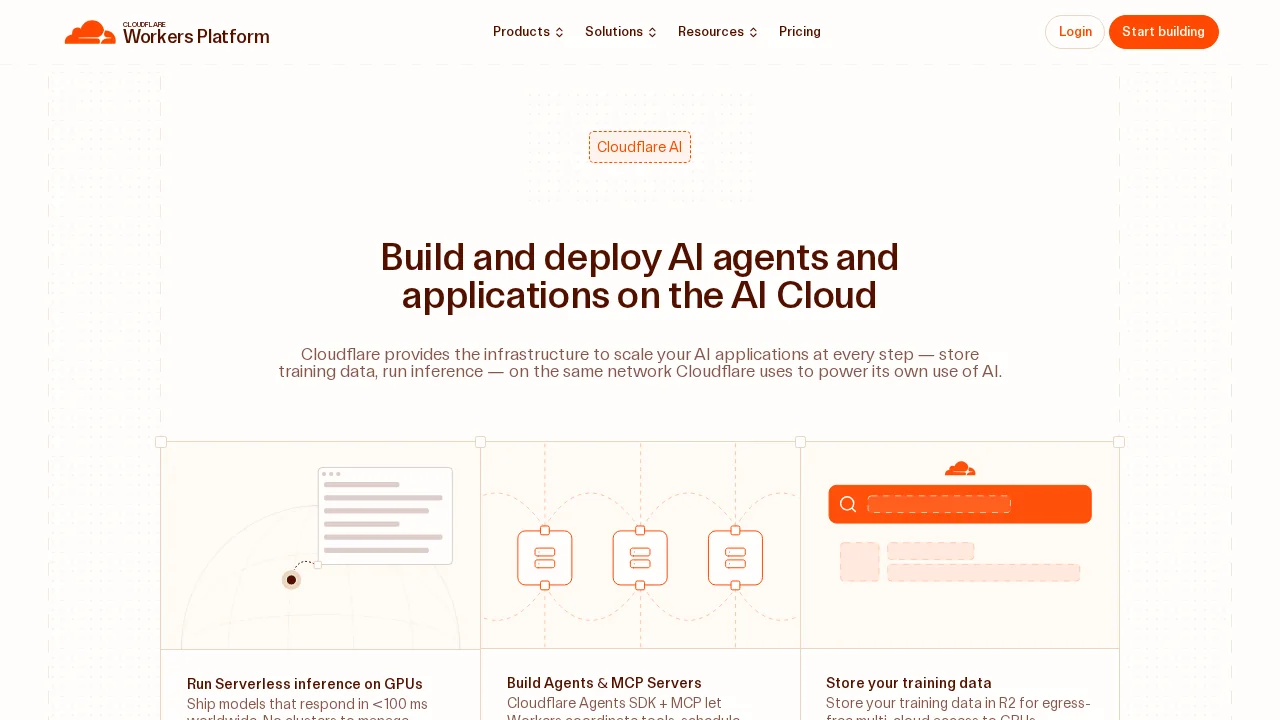

CloudflareAI lets developers run low-latency AI inference on Cloudflare's global network using serverless GPUs.

About CloudflareAI

CloudflareAI brings machine learning inference directly to Cloudflare's global network, so developers can run AI tasks at the edge, close to their users. Pre-trained models run natively alongside Cloudflare Workers on GPU-accelerated hardware, which means applications do not have to provision, manage, or scale any infrastructure themselves. Models can be called from Workers, from Pages, or over a REST API, so the platform fits a range of application architectures.
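As a rough illustration of the REST option mentioned above, the sketch below builds a request for a Workers AI model. The URL follows the shape of Cloudflare's public REST endpoint for running a model; the helper name `buildAiRunRequest`, the placeholder account ID and token, and the choice of model are assumptions made for this example, not something this page specifies.

```typescript
// Plain description of an HTTP request, so the sketch does not depend on
// any particular runtime's Request type.
interface AiRunRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Hypothetical helper: assembles a REST call that runs one model.
// accountId and apiToken are placeholders the caller must supply.
function buildAiRunRequest(
  accountId: string,
  apiToken: string,
  model: string,
  input: Record<string, unknown>,
): AiRunRequest {
  return {
    url: `https://api.cloudflare.com/client/v4/accounts/${accountId}/ai/run/${model}`,
    method: "POST",
    headers: {
      Authorization: `Bearer ${apiToken}`,
      "Content-Type": "application/json",
    },
    body: JSON.stringify(input),
  };
}

// Example: a text-generation request (model name assumed for illustration).
const exampleRequest = buildAiRunRequest(
  "YOUR_ACCOUNT_ID",
  "YOUR_API_TOKEN",
  "@cf/meta/llama-3.1-8b-instruct",
  { prompt: "Summarize what edge inference means in one sentence." },
);
```

The returned object can be passed to any HTTP client, for example `fetch(exampleRequest.url, exampleRequest)` in a runtime that provides `fetch`.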

The service includes a curated catalog of production-ready models covering common AI tasks like image classification, sentiment analysis, speech recognition, text generation, and translation. Developers can deploy models with just a few lines of code, choosing from templates backed by trusted AI organizations including Meta, Nvidia, Microsoft, Hugging Face, and Databricks. This reduces development friction and accelerates time-to-market for AI-powered features.
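To give a sense of what "a few lines of code" might look like for one of the listed tasks, here is a minimal sentiment-analysis sketch written against the Workers AI binding. The `AiBinding` interface is a simplified local stand-in for the binding a Worker would receive, and the model name, the `{ text }` input, and the list of `{ label, score }` results are assumptions based on the catalog's text-classification models; the helper `topSentiment` is hypothetical.

```typescript
// One entry of a text-classification result (assumed shape).
interface SentimentScore {
  label: string;
  score: number;
}

// Simplified local stand-in for the Workers AI binding (assumption):
// only the single call this sketch needs.
interface AiBinding {
  run(model: string, input: { text: string }): Promise<SentimentScore[]>;
}

// Model name assumed for illustration.
const SENTIMENT_MODEL = "@cf/huggingface/distilbert-sst-2-int8";

// Hypothetical helper: runs the classifier and returns the highest-scoring label.
async function topSentiment(ai: AiBinding, text: string): Promise<SentimentScore> {
  const scores = await ai.run(SENTIMENT_MODEL, { text });
  if (scores.length === 0) {
    throw new Error("model returned no scores");
  }
  return scores.reduce((best, s) => (s.score > best.score ? s : best));
}
```

Inside a Worker, the binding object (commonly exposed as `env.AI`, assuming it is configured under that name) would be passed as the `ai` argument.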

CloudflareAI's full-stack approach combines several products into one AI workflow. Workers AI handles inference, while Vectorize provides a globally distributed vector database for storing embeddings and running semantic search. AI Gateway adds production reliability through caching, rate limiting, and analytics. For teams that store large training datasets or model artifacts, R2 object storage offers S3-compatible storage that works across clouds and charges no egress fees.
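The way Workers AI and Vectorize fit together for semantic search can be sketched as follows: embed the query text with an embedding model, then ask the vector index for the nearest stored vectors. The two interfaces are simplified local stand-ins for the real bindings, and the model name, the `data` field of the embedding response, and the helper `semanticSearch` are assumptions for illustration.

```typescript
// Simplified stand-in for the Workers AI binding (assumption): the
// embedding model is assumed to return one vector per input string.
interface EmbeddingAi {
  run(model: string, input: { text: string[] }): Promise<{ data: number[][] }>;
}

// Simplified stand-ins for a Vectorize index and its query result (assumption).
interface VectorMatch {
  id: string;
  score: number;
}
interface VectorIndex {
  query(vector: number[], options: { topK: number }): Promise<{ matches: VectorMatch[] }>;
}

// Embedding model name assumed for illustration.
const EMBEDDING_MODEL = "@cf/baai/bge-base-en-v1.5";

// Hypothetical helper: embeds the question, then returns the closest stored items.
async function semanticSearch(
  ai: EmbeddingAi,
  index: VectorIndex,
  question: string,
  topK = 3,
): Promise<VectorMatch[]> {
  const embedding = await ai.run(EMBEDDING_MODEL, { text: [question] });
  const vector = embedding.data[0];
  if (!vector) {
    throw new Error("embedding model returned no vector");
  }
  const result = await index.query(vector, { topK });
  return result.matches;
}
```

Keeping the bindings behind small interfaces like these also makes the search logic easy to exercise with in-memory stubs before wiring it to a deployed index.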

The platform emphasizes cost efficiency and reliability, aiming to let developers build secure, scalable AI applications without vendor lock-in. Because inference is distributed across Cloudflare's global edge network, requests can be served close to users, which can lower latency and improve availability compared with serving from a single centralized region, while pricing stays predictable.

Alternatives to CloudflareAI

AppDeploy

Rocket

biela.dev

Momen | Vibe Architect

Sketchflow.ai

ThinkRoot - The AI Compiler

Atoms