OpenRouter

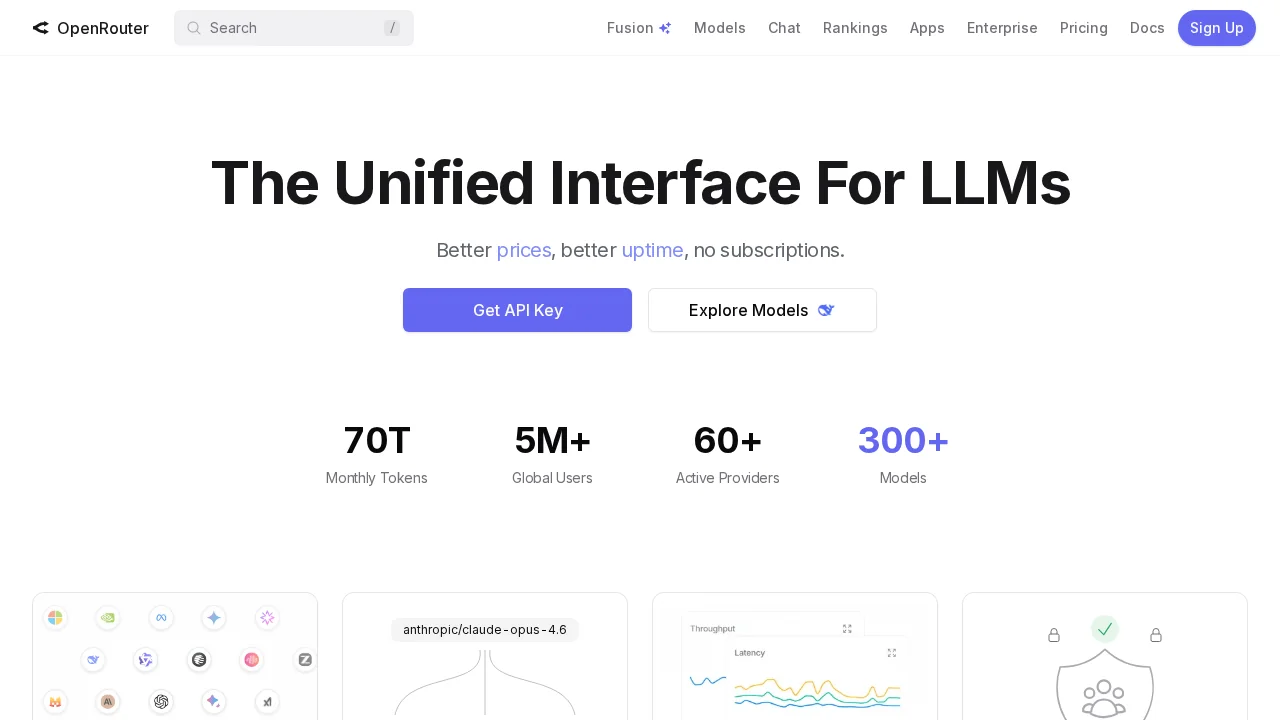

OpenRouter is a unified LLM interface that lets you compare, access, and use multiple AI models from one platform.

About OpenRouter

OpenRouter simplifies AI model selection by providing a centralized platform where you can browse, compare, and deploy large language models without managing individual subscriptions. The tool displays pricing, performance metrics, and parameter counts side by side, so you can find the most cost-effective and capable model for a specific task. Whether you need advanced reasoning, coding assistance, or complex problem-solving, OpenRouter gives you access to diverse models including DeepSeek V3, Gemini 2.5 Pro, and Mistral Small 3.1.
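As a rough illustration of the kind of price comparison described above, the following Python sketch ranks models by estimated cost for a given workload. It assumes per-model prices are quoted in US dollars per million input and output tokens; the model names and price figures are hypothetical placeholders, not values taken from OpenRouter, and the helper names (`ModelPrice`, `estimate_cost`, `rank_by_cost`) are invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Hypothetical pricing for one model, in US dollars per million tokens."""
    name: str
    input_per_million: float
    output_per_million: float


def estimate_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """Estimate the dollar cost of a workload for a single model."""
    return (
        input_tokens / 1_000_000 * price.input_per_million
        + output_tokens / 1_000_000 * price.output_per_million
    )


def rank_by_cost(prices, input_tokens, output_tokens):
    """Return (name, cost) pairs sorted from cheapest to most expensive."""
    costs = [(p.name, estimate_cost(p, input_tokens, output_tokens)) for p in prices]
    return sorted(costs, key=lambda pair: pair[1])


# Placeholder figures for illustration only; these are NOT real prices.
EXAMPLE_PRICES = [
    ModelPrice("model-a", input_per_million=0.50, output_per_million=1.50),
    ModelPrice("model-b", input_per_million=1.25, output_per_million=10.00),
    ModelPrice("model-c", input_per_million=0.10, output_per_million=0.30),
]

if __name__ == "__main__":
    # Example workload: 2M input tokens and 500k output tokens.
    for name, cost in rank_by_cost(EXAMPLE_PRICES, 2_000_000, 500_000):
        print(f"{name}: ${cost:.2f}")
```

Cost is only one axis of the comparison; a fuller version of this sketch would also weigh the performance metrics and parameter counts mentioned above before picking a model.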

Beyond model discovery, OpenRouter functions as an interactive hub where you can test models through built-in chat interfaces and see which models are trending in the AI community. Trying models hands-on reduces guesswork when choosing among the many available options. The platform highlights high-parameter models suited to demanding applications, so you can handle sophisticated workflows without switching between multiple services.

OpenRouter also serves as a comprehensive information center for AI-related news and announcements, keeping you informed about model updates and industry developments. By consolidating model access, comparison tools, and community insights into one interface, OpenRouter reduces friction in your AI workflow and helps you stay current with rapidly evolving language model capabilities.

Alternatives to OpenRouter

Notis

remio: Your Personal ChatGPT

SureThing.io

TheLibrarian.io

Supernormal App

Base44 Superagents

Caret