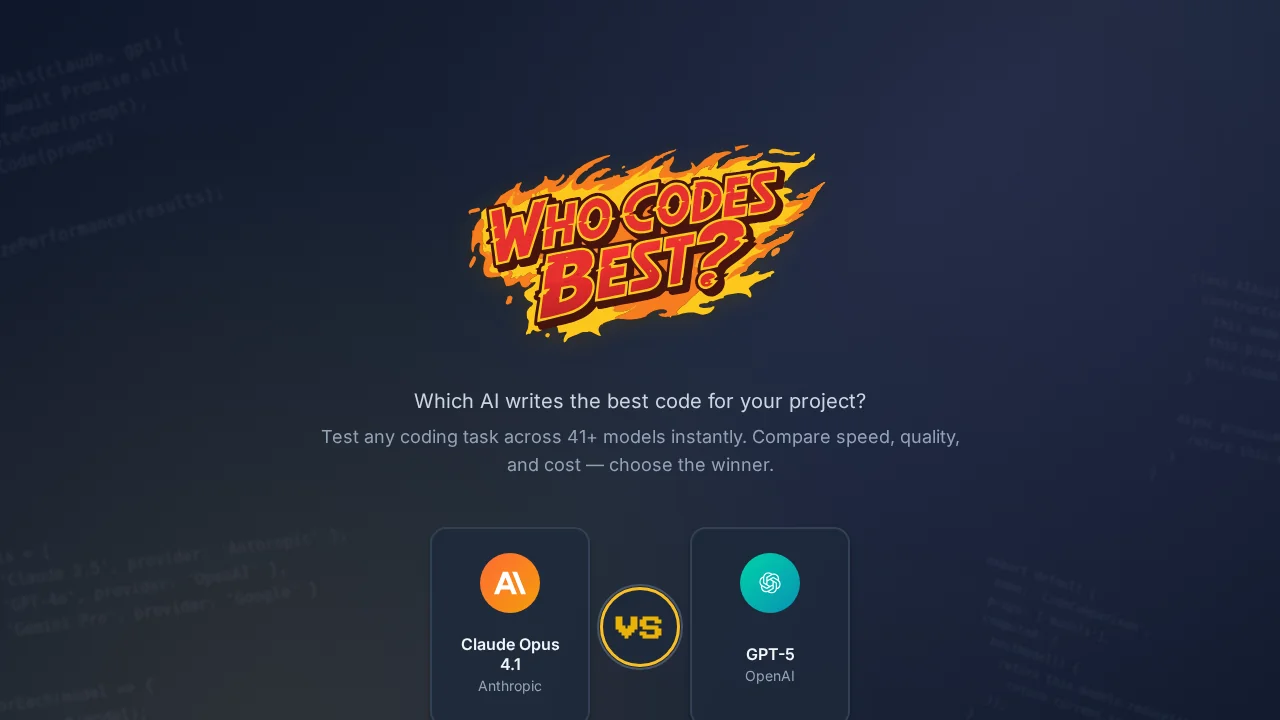

Who Codes Best?

Who Codes Best? benchmarks and compares AI coding assistants to help you find the best model for your development needs.

About Who Codes Best?

Who Codes Best? is a dedicated benchmarking platform that evaluates and compares the performance of leading AI coding assistants. By testing models like Claude 3.5, GPT-4o, and Gemini Pro across real coding tasks, the platform provides objective performance data to help developers and teams choose the right AI tool for their workflow.

The platform's core strength is its CodeComparison function, which runs identical coding challenges across multiple AI models simultaneously. Users can then compare the results along three dimensions: execution speed, code quality, and cost-effectiveness. This multi-factor analysis reduces the guesswork in choosing between competing AI coding solutions.

Beyond one-off comparisons, Who Codes Best? maintains a directory of popular AI models and coding agents, each with ranked samples of the code it generated. Users can review actual outputs to understand each model's strengths and weaknesses across different coding scenarios. The platform also curates news about new AI model releases and benchmark results, keeping developers informed about advances in AI-assisted coding.

Whether you're evaluating AI coding assistants for personal projects, team adoption, or enterprise deployment, Who Codes Best? provides transparent, data-driven insights to support informed decision-making in an increasingly competitive AI landscape.

Alternatives to Who Codes Best?

CodeRabbit

Kilo | Code Reviewer

Verdent

Base44

Rocket

Pulse Editor: Vibe Code & Automate

Mocha