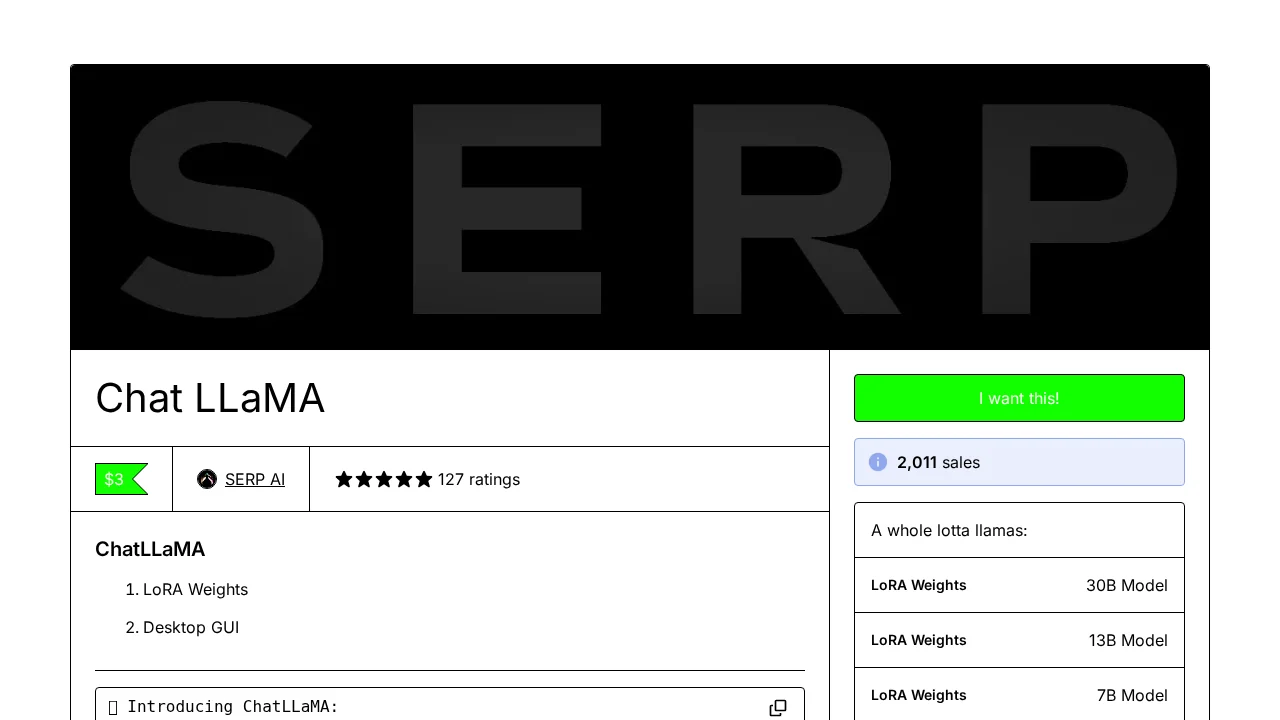

ChatLLaMA

ChatLLaMA lets you build custom AI assistants that run locally on your own GPU, using models fine-tuned for conversation.

About ChatLLaMA

ChatLLaMA lets users create personalized AI assistants that run directly on their own GPU hardware, with no dependency on external services. It uses LoRA (Low-Rank Adaptation) adapters trained on high-quality conversational datasets to model natural dialogue between the assistant and the user. Three model sizes are available (7B, 13B, and 30B parameters), so users can pick the one that fits their hardware and performance requirements.
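To make the LoRA idea mentioned above concrete, here is a minimal sketch of how a low-rank update is merged into a frozen weight matrix. It is a toy illustration of the general technique, not ChatLLaMA's actual code: the function names (`lora_merge`, `trainable_params`), the matrix shapes, and the `alpha` value are all assumptions chosen for the example.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_merge(w, a, b, alpha):
    """Return W + (alpha / r) * B @ A, the merged weight used at inference.

    w: frozen base weight, shape (d_out, d_in)
    a: trainable down-projection, shape (r, d_in)
    b: trainable up-projection, shape (d_out, r)
    """
    r = len(a)                 # rank of the update = number of rows in A
    delta = matmul(b, a)       # low-rank update, shape (d_out, d_in)
    scale = alpha / r
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def trainable_params(d_out, d_in, r):
    """Number of values LoRA trains for one matrix, versus d_out * d_in in full."""
    return r * (d_in + d_out)


# Toy shapes (hypothetical): a 4x6 weight adapted with rank 2.
d_out, d_in, r = 4, 6, 2
w = [[0.0] * d_in for _ in range(d_out)]
a = [[1.0] * d_in for _ in range(r)]
b = [[0.5] * r for _ in range(d_out)]  # real training usually starts B at zero

merged = lora_merge(w, a, b, alpha=2.0)
print(len(merged), len(merged[0]))                 # shape of the merged weight
print(trainable_params(d_out, d_in, r), d_out * d_in)
```

The point of the design is the parameter count: for a square 4096-wide matrix and rank 8, the adapter trains 8 * (4096 + 4096) = 65,536 values instead of 4096 * 4096 = 16,777,216, which is why adapters of this kind are practical to train and share for large base models.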

The platform includes a desktop GUI that simplifies local deployment, making it accessible to users without deep technical expertise. Users can enhance their assistants by submitting custom dialogue-style datasets, which are incorporated into training to continuously improve conversation quality and relevance. An upcoming RLHF (Reinforcement Learning from Human Feedback) version will further refine response alignment with user preferences.
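The source does not specify what format a submitted dialogue-style dataset should take. Purely as an illustration, the sketch below assumes a JSON Lines file in which each record holds a `turns` list of alternating `user` and `assistant` messages. The field names, role names, and the helpers `validate_dialogue` and `load_dialogues` are all hypothetical, so check the project's own submission guidelines before preparing real data.

```python
import json


def validate_dialogue(record):
    """Check one record against the assumed (hypothetical) dialogue schema.

    A record passes if it is a dict with a non-empty "turns" list whose
    entries alternate user -> assistant and each carry non-blank text.
    """
    if not isinstance(record, dict):
        return False
    turns = record.get("turns")
    if not isinstance(turns, list) or not turns:
        return False
    expected = "user"
    for turn in turns:
        if not isinstance(turn, dict) or turn.get("role") != expected:
            return False
        text = turn.get("text")
        if not isinstance(text, str) or not text.strip():
            return False
        expected = "assistant" if expected == "user" else "user"
    return True


def load_dialogues(jsonl_text):
    """Parse JSON Lines text and keep only records that pass validation."""
    records = []
    for line in jsonl_text.splitlines():
        line = line.strip()
        if not line:
            continue
        record = json.loads(line)
        if validate_dialogue(record):
            records.append(record)
    return records


# Two sample records: the first is well formed, the second starts with the
# wrong role and is dropped.
sample = "\n".join([
    json.dumps({"turns": [
        {"role": "user", "text": "What is LoRA?"},
        {"role": "assistant", "text": "A low-rank fine-tuning method."},
    ]}),
    json.dumps({"turns": [{"role": "assistant", "text": "Hello"}]}),
])

dialogues = load_dialogues(sample)
print(len(dialogues))
```

Validating turn order and empty messages before submission is a cheap way to catch malformed conversations that would otherwise add noise to training.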

ChatLLaMA serves both individual users seeking private AI companions and developers building conversational applications. The community actively supports GPU resource sharing, allowing developers to access computational power in exchange for collaborative coding contributions. This creates an ecosystem where users benefit from improved models while contributors gain access to necessary infrastructure for AI development.

Alternatives to ChatLLaMA

88Agents

BabyMealBot

TalkWiz.ai

AccuChats

Site2CRM

Chattitude

Recall